Social Media Algorithms Face Transparency Laws: The End of the Black Box Era

A new wave of legislation across the US, EU, and beyond is forcing social media platforms to open the black box of their recommendation algorithms — the opaque systems that determine what billions of people see, read, and engage with every day. Algorithm transparency laws represent one of the most significant regulatory interventions in the history of the internet, challenging the core business model of platforms that derive their value from proprietary systems designed to maximize user engagement. The consequences will ripple across technology, media, politics, and advertising.

Why Algorithm Transparency Matters Now

Social media algorithms determine what content reaches users from the vast pool of available posts — TikTok’s For You feed, Instagram’s Explore tab, YouTube’s recommendations sidebar, X’s For You timeline, and Facebook’s News Feed all use algorithmic ranking to decide what each user sees. These algorithms process thousands of signals (viewing history, engagement patterns, demographic data, social connections, device information, time of day) to predict which content each user is most likely to engage with, and then surface that content preferentially.
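As a rough illustration of the ranking step described above, the sketch below scores posts with a weighted sum of predicted engagement probabilities and compares the result with a plain chronological ordering. The `Post` fields, the weights, and the sample posts are all assumptions made for illustration; real platforms use far more signals and do not publish their weights in this form.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    timestamp: int    # seconds since some reference point; larger means newer
    p_like: float     # predicted probability that the user likes the post
    p_comment: float  # predicted probability that the user comments
    p_share: float    # predicted probability that the user shares


# Hypothetical weights, assumed for illustration only.
WEIGHTS = {"p_like": 1.0, "p_comment": 4.0, "p_share": 8.0}


def engagement_score(post: Post) -> float:
    """Weighted sum of the predicted engagement probabilities."""
    return (
        WEIGHTS["p_like"] * post.p_like
        + WEIGHTS["p_comment"] * post.p_comment
        + WEIGHTS["p_share"] * post.p_share
    )


def rank_by_engagement(posts: list[Post]) -> list[Post]:
    """Highest predicted engagement first."""
    return sorted(posts, key=engagement_score, reverse=True)


def rank_chronologically(posts: list[Post]) -> list[Post]:
    """Newest first, ignoring all user-specific predictions."""
    return sorted(posts, key=lambda p: p.timestamp, reverse=True)


posts = [
    Post("calm_explainer", timestamp=300, p_like=0.30, p_comment=0.01, p_share=0.01),
    Post("outrage_bait", timestamp=100, p_like=0.20, p_comment=0.15, p_share=0.10),
    Post("friend_photo", timestamp=200, p_like=0.40, p_comment=0.05, p_share=0.00),
]

engagement_order = [p.post_id for p in rank_by_engagement(posts)]
chronological_order = [p.post_id for p in rank_chronologically(posts)]
print(engagement_order)     # ['outrage_bait', 'friend_photo', 'calm_explainer']
print(chronological_order)  # ['calm_explainer', 'friend_photo', 'outrage_bait']
```

In this toy data the oldest post ranks first under engagement scoring because comments and shares carry the heaviest assumed weights, which is the dynamic the following paragraphs discuss.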

The problem isn’t personalization per se: showing users content they’re interested in is a reasonable design goal. The problem is that engagement-optimized algorithms tend to amplify content that provokes strong emotional reactions such as outrage, fear, moral indignation, and tribal solidarity. Peer-reviewed research, including studies published in Science and Nature, has found that engagement-ranked feeds can surface more inflammatory and polarizing content, and more misinformation, than chronologically sorted or randomly selected content, although the size of the effect varies across platforms and study designs. The algorithms don’t intend to radicalize users or spread misinformation. They optimize for engagement, and emotionally provocative content generates more engagement than calm, factual content.

The real-world consequences of this dynamic include documented contributions to political polarization, the spread of health misinformation (anti-vaccine content, COVID conspiracy theories), youth mental health impacts (body image issues amplified by Instagram’s algorithm prioritizing engagement-bait content), and in extreme cases, incitement to violence (Facebook’s algorithm was found to have amplified content contributing to the Rohingya genocide in Myanmar). These consequences have generated sufficient political consensus — rare in a polarized environment — to produce bipartisan and international legislative action.

The EU’s Digital Services Act: The Gold Standard

The European Union’s Digital Services Act (DSA), fully applicable since February 2024, establishes the most comprehensive algorithm transparency requirements in the world. Platforms must explain, in plain and intelligible language, the main parameters their recommender systems use to rank content and any options users have to modify or influence those parameters. Very Large Online Platforms (VLOPs), defined as platforms with more than 45 million average monthly active users in the EU, face additional obligations on top of this baseline.

The DSA requires platforms to offer at least one recommendation option that is “not based on profiling” — meaning users must be able to see a chronological feed or a feed ranked by non-personalized criteria rather than the algorithmically curated default. Instagram, TikTok, and Facebook have all added chronological feed options in response to this requirement, though the implementations vary in prominence and usability. Platforms consistently report that fewer than 10% of users switch to chronological feeds when given the option — a finding that platforms cite as evidence that users prefer algorithmic feeds, and that critics cite as evidence that switching mechanisms are not prominent enough.

Annual risk assessments are another DSA requirement. Platforms must conduct and publish assessments of the systemic risks their services and algorithms create, including risks to fundamental rights, electoral processes, public health, and child safety, and describe the mitigation measures they’ve implemented. Compliance is checked through annual audits carried out by independent auditing organizations. Separately, the DSA sets up a structured data access program for “vetted researchers,” a status granted by national Digital Services Coordinators, under which approved researchers can request platform data to study systemic risks.

The enforcement mechanism is significant: the DSA allows fines of up to 6% of global annual turnover for violations. For a company like Meta (with roughly $135 billion in 2023 revenue), that’s a potential fine of about $8.1 billion, enough to make algorithm transparency a board-level compliance priority rather than a PR exercise.
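The fine ceiling is simple arithmetic, sketched here for the $135 billion revenue figure used above. The function name `max_dsa_fine` is hypothetical, and the calculation treats reported revenue as a stand-in for global annual turnover, which is an assumption.

```python
DSA_MAX_FINE_RATE = 0.06  # up to 6% of global annual turnover, as stated in the text


def max_dsa_fine(global_annual_turnover_usd: float) -> float:
    """Upper bound on a DSA fine for a given annual turnover."""
    return DSA_MAX_FINE_RATE * global_annual_turnover_usd


fine = max_dsa_fine(135e9)  # $135 billion, the revenue figure cited above
print(f"${fine / 1e9:.1f} billion")  # $8.1 billion
```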

US Legislative Efforts

The United States lacks a comprehensive federal algorithm transparency law, but multiple bills have been introduced in Congress and several states have enacted their own requirements. The most significant federal proposals include the Platform Accountability and Transparency Act (PATA), which would require platforms to provide data access to researchers studying algorithmic impact; the Kids Online Safety Act (KOSA), which would require platforms to disable algorithmic amplification for users under 18 by default; and the Filter Bubble Transparency Act, which would require platforms to offer non-algorithmic content ranking options.

KOSA has generated the most bipartisan momentum, driven by widespread concern about social media’s impact on youth mental health. The bill would require platforms to use the “highest level of privacy” for users under 18, disable algorithmic features that could harm minors (including infinite scroll, autoplay, and engagement-optimized recommendations), and provide parents with tools to supervise their children’s social media experience. Multiple states have passed similar youth-focused social media laws, though several have been challenged on First Amendment grounds.

State-level action has been faster than federal. California’s Age-Appropriate Design Code Act, which was scheduled to take effect in July 2024 but has been blocked by a federal court injunction, would require platforms to consider the best interests of children in their design decisions, including algorithmic recommendations. Utah, Texas, Louisiana, and several other states have enacted laws restricting minors’ access to social media, with varying requirements for age verification and algorithmic safeguards. The patchwork of state laws creates compliance complexity for platforms but also creates pressure for a federal standard that would preempt conflicting state requirements.

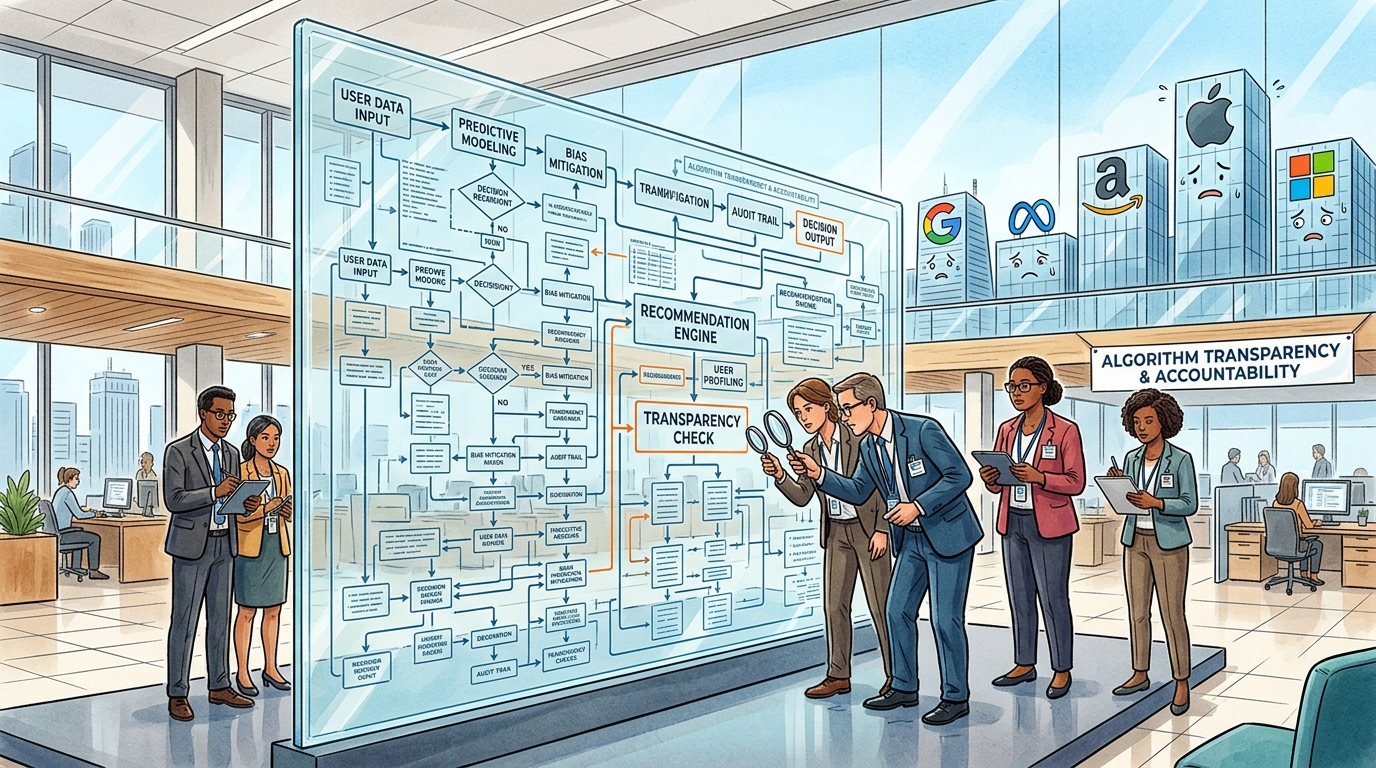

What Platforms Must Actually Reveal

The practical question is what algorithm transparency actually means in implementation. Platforms argue that publishing their recommendation algorithm source code would expose trade secrets, enable gaming of the system (bad actors could optimize content to exploit algorithmic preferences), and provide competitors with proprietary technology. Regulators generally accept these concerns and don’t require source code disclosure — instead, they require functional transparency: explanations of what factors the algorithm considers, how those factors are weighted, and what impact the algorithm has on the content users see.

Several categories of disclosure are emerging as regulatory standards: input transparency (what data about the user is used to personalize recommendations — viewing history, location, demographic inferences, social connections); factor transparency (what content characteristics are used in ranking — engagement predictions, recency, source authority, topic category); outcome transparency (aggregate statistics about algorithmic impact — what percentage of news feed impressions are from followed accounts versus algorithmically recommended accounts, how content reach varies by topic category); and option transparency (what controls users have to modify algorithmic behavior — turning off personalization, adjusting topic preferences, reducing recommendations from specific categories).
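Outcome transparency is the easiest of these categories to make concrete. The sketch below computes the two example statistics mentioned above (the share of impressions from followed versus recommended accounts, and how the recommended share varies by topic) from a tiny impression log. The log format and its contents are hypothetical; no platform's actual reporting schema is implied.

```python
from collections import Counter

# Hypothetical impression log of (topic, source) pairs, invented for illustration.
impressions = [
    ("news", "followed"),
    ("news", "recommended"),
    ("sports", "recommended"),
    ("news", "recommended"),
    ("cooking", "followed"),
    ("sports", "recommended"),
    ("news", "followed"),
    ("cooking", "recommended"),
]


def share_by_source(log):
    """Fraction of all impressions that came from each source type."""
    counts = Counter(source for _, source in log)
    total = sum(counts.values())
    return {source: count / total for source, count in counts.items()}


def recommended_share_by_topic(log):
    """Per topic, the fraction of impressions that were algorithmically recommended."""
    totals = Counter(topic for topic, _ in log)
    recommended = Counter(topic for topic, source in log if source == "recommended")
    return {topic: recommended[topic] / totals[topic] for topic in totals}


source_shares = share_by_source(impressions)
topic_shares = recommended_share_by_topic(impressions)
print(source_shares)  # {'followed': 0.375, 'recommended': 0.625}
print(topic_shares)   # {'news': 0.5, 'sports': 1.0, 'cooking': 0.5}
```

Aggregates like these reveal nothing about model internals or source code, which is why they fit the functional transparency approach regulators have taken.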

Researcher access is the most contested area. Independent researchers argue that meaningful algorithm accountability requires access to platform data at a level that user-facing transparency options cannot provide. They need to analyze how specific types of content (political, health-related, inflammatory) are amplified or suppressed by algorithms across millions of users — research that requires access to recommendation logs, A/B testing results, and internal metrics that platforms consider highly proprietary. The DSA’s vetted researcher framework provides structured access, but researchers report that the process is slow, access is limited, and platforms are not always cooperative despite legal obligations.

Platform Responses and Resistance

Platform responses to transparency requirements range from grudging compliance to proactive transparency. Meta publishes a “Transparency Center” with documentation about how its recommendation systems work, quarterly reports on content moderation actions, and an API that provides aggregate data to researchers. TikTok launched “Project Clover” in Europe, housing European user data and algorithmic operations in European data centers with third-party oversight. YouTube publishes an annual recommendations report describing how its algorithm handles borderline content (content that doesn’t violate policies but is low quality or potentially harmful).

Behind the compliance, platforms are actively lobbying against more aggressive transparency requirements. Industry groups including NetChoice (representing Meta, Google, Amazon, and others) have filed legal challenges against state social media laws on First Amendment grounds. They argue that algorithmic curation is a form of protected editorial speech, and that allowing the government to dictate how platforms curate content amounts to unconstitutional compelled speech. Two Supreme Court cases (Moody v. NetChoice and NetChoice v. Paxton) have addressed state laws regulating platform content moderation, though the holdings have been narrow and the fundamental constitutional questions remain unresolved.

The technical challenge of transparency shouldn’t be understated. Modern recommendation systems are not simple rule-based algorithms — they’re machine learning models with billions of parameters that were trained on vast datasets and produce outputs that are difficult to explain even for the engineers who built them. A neural network that predicts which TikTok video a user will watch next considers thousands of features through a mathematical process that doesn’t decompose neatly into human-readable rules. Meaningful transparency requires not just disclosure but interpretation — translating the model’s behavior into explanations that regulators and users can understand and act on.
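To show what a human-readable explanation can look like in the simplest possible case, the sketch below uses a toy linear model in which each feature's contribution to the prediction is just its weight times its value. The feature names, weights, and bias are invented for illustration. The deep models described above do not decompose this cleanly, so production systems would need approximate attribution methods instead.

```python
import math

# Hypothetical weights of a toy linear model (assumed, not taken from any platform).
WEIGHTS = {
    "watched_similar_videos": 2.0,
    "follows_creator": 1.5,
    "video_is_recent": 0.5,
    "reported_similar_content": -3.0,
}
BIAS = -1.0


def predict_watch_probability(features):
    """Logistic model: sigmoid of the bias plus the weighted feature sum."""
    logit = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-logit))


def explain(features, top_n=2):
    """Return the top_n features by absolute contribution (weight * value)."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return ranked[:top_n]


user_features = {
    "watched_similar_videos": 1.0,
    "follows_creator": 0.0,
    "video_is_recent": 1.0,
    "reported_similar_content": 0.0,
}

probability = predict_watch_probability(user_features)
top_reasons = explain(user_features)
print(round(probability, 3))  # 0.818
print(top_reasons)  # [('watched_similar_videos', 2.0), ('video_is_recent', 0.5)]
```

A user-facing explanation could then read "recommended mainly because you watched similar videos," which is the kind of translation from model behavior to plain language that the paragraph above describes.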

The Impact on the Advertising Business

Algorithm transparency has significant implications for digital advertising because the same algorithms that recommend content also target advertisements. If platforms must disclose how they personalize content recommendations, the logical extension is disclosure of how they personalize ad targeting — what data they use, what categories of user profiles they create, and how ads are matched to users. The DSA already requires this for EU users: platforms must provide transparency about why a specific ad was shown and what targeting criteria the advertiser used.

Advertisers have mixed feelings about algorithm transparency. Brand safety teams welcome the ability to understand how and where their ads appear, reducing the risk of association with harmful content. But advertising effectiveness often depends on opaque optimization — platforms’ ability to find and target receptive users through mechanisms that the advertiser doesn’t fully understand or control. If transparency requirements limit the sophistication of algorithmic ad targeting, advertising effectiveness (and platform ad revenue) could decrease.

Looking Forward

Algorithm transparency laws are still in their early stages, with enforcement evolving and legal challenges ongoing. The trajectory is clearly toward more transparency rather than less — no political constituency is arguing that algorithms should be less transparent than they are today. The open questions are how deep the transparency goes (surface-level user controls versus deep researcher access to algorithmic internals), how it’s enforced (self-reporting versus independent auditing versus third-party testing), and how constitutional protections interact with regulatory requirements in the US specifically.

The endgame is probably not complete algorithmic openness (which would be both commercially untenable and technically impractical) but a regulated transparency regime similar to financial regulation: platforms operate proprietary systems but disclose specified information to users and regulators, submit to independent audits, and face consequences when their systems produce documented harms. The algorithmic black box isn’t opening completely, but it’s no longer completely closed — and the era of platforms operating engagement-optimized algorithms with zero public accountability is definitively ending.
